What Were You Doing 24 Years Ago Today? December 31, 2023

Posted by mwidlake in development, humour, Testing, working. Tags: behaviour, Humour, system development, technology, Y2K

Yes, I know, some of you were still at school 24 years ago, a couple of you were not even born! But for many of you, 24 years ago today you had a job in I.T., it was the last day of the 20th century, December 31st 1999 – and you were either at work or on call!

Those of us in the I.T. industry at the time remember not only the media frenzy over the “Y2K” bug, where computer systems were predicted to crash because years had been stored as two digits rather than four, but also the sheer tedium of fixing these systems or proving they would not crash (by doing that old-school thing called “testing”).

I did my fair share of that work, it almost seemed that all IT contract work in 1998 and 1999 was about the Y2K bug. I knew of several large development or hardware refresh projects that were cancelled or put on hold so that Y2K work could be done instead. A couple of the systems I worked on at the time would definitely have had issues without the remedial work, a couple would have not. In the end Y2K in computer systems was almost a non-event, some systems crashed or went wrong but most did not. And, of course, because the issue was not as bad as the end-of-the-world media made out it would be, there were loads and loads of people afterwards claiming it was all a hoax. Probably the same people who claim Covid-19 is just a ‘flu and the moon landings did not happen 😊. They were oblivious to just how much money and resource was spent fixing the issue.

In any case, the end result for many of us I.T. people was that more (though dull) work was available in the run-up to the day, and on the 31st December 1999 itself we were forced to be in an office or on-call & (theoretically) sober in order to handle any missed issues. While 95% of the world was partying and 4% were in their basement surrounded by 2 thousand tins of soup and bottles of water (waiting for world catastrophe or some religious thing or both), we were supposed to be in a fecking office.

Were you?

I wasn’t. I was in a big country house with a load of friends, having a good time. You see, when I took on the role that I was still in come 31st December 1999, I had put a clause in my contract that I would be unavailable the week that the Y2K night fell in. Other technical people in the company had booked holiday for then, but it nearly all got cancelled (I noticed at the time this did not seem to apply to senior management). But it was in my contract so, though they tried to bully me, their only real option would have been to fire and re-hire me (which they tried to make happen). Just occasionally, I would actually plan ahead and make a smart move. Occasionally.

Don’t Forget Old Features – Materialized Views #JoelKallmanDay October 11, 2023

Posted by mwidlake in Architecture, database design, development, Knowledge, performance, SQL. Tags: JoelKallmanDay, Materialized Views, performance, SQL, system development, user group

People who spread knowledge on technical subjects tend to focus very much on the new, shiny features and enhancements. Maybe as a result of this, old features get forgotten about – like Table Clusters. How many of you know what Oracle Table Clusters are and how they might improve performance? Who has used Table Clusters in Oracle in the last 20 years? (Apart from everyone – as the data dictionary still uses them!). Table Clusters are maybe worth leaving in the past as, apart from the niche use of Single Table Hash Clusters to give a very efficient selection path for a row in a static table via the PK, they generally have more issues than benefits I think.

Well, an old feature I do think is worth revisiting is Materialized Views. This is where you create a real table based on a SQL query. The SQL query can be simple or complex, it can join two or more tables together, it can even group data and select min, max, avg, analytical functions etc. to summarise large data sets.

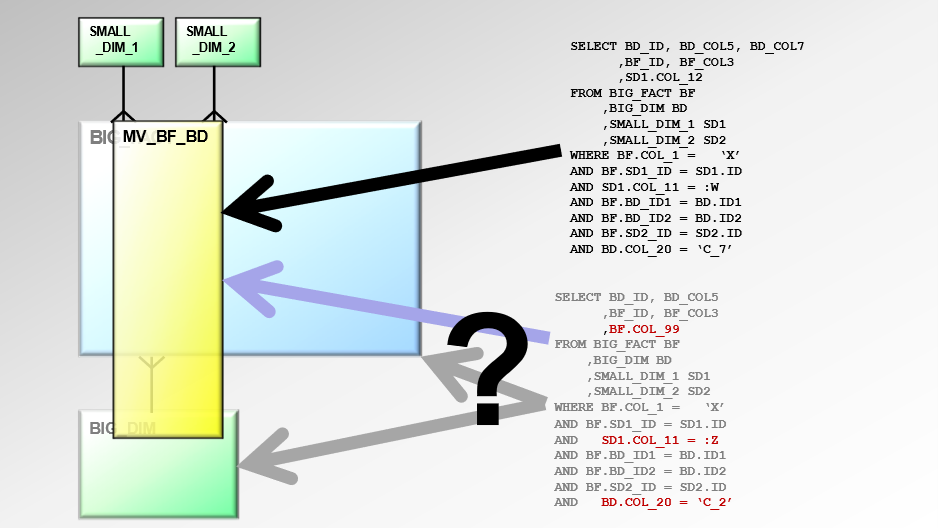

Consider the below query:

A Materialized View can be created that does the main, expensive join and includes SOME of the filters:

This query is run and the yellow “MV_BF_BD” table is physically created and populated. It is “Just” a table.

If the data in the underlying tables changes, the Materialized View is marked as stale and, by default, won’t be used. Used for what?

If the SQL statement used to create the Materialized View is a SQL statement the application uses or, more significantly, is a common part of several larger, more complex SQL statements, Oracle can use the Materialized View (which is just a table remember) to help satisfy the query.

In the below we see two SQL statements. One will certainly use the Materialized View and will, as a result, run more quickly. The second query might use the MV, the optimizer will decide.

If this Materialized View takes a lot of time or resource to generate (say it joins a million row table to a 100 million row table and then filters out 50% of the joined rows), then generating it once and using only the RESULTS in half a dozen queries will make all of those queries run faster.
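To make the "generate it once, reuse the results" idea concrete, here is a rough sketch in Python using SQLite. Big caveats: SQLite has no Materialized Views and no query rewrite, so this only imitates the "it is just a table" part with a plain `CREATE TABLE ... AS SELECT`; in Oracle itself you would use a `CREATE MATERIALIZED VIEW` statement instead. The underlying tables `big_fact` and `big_dim`, their columns and the data are all made up for illustration; only the name `mv_bf_bd` echoes the text above.

```python
import sqlite3

conn = sqlite3.connect(":memory:")
cur = conn.cursor()

# Hypothetical base tables (assumption: not taken from the original post).
cur.executescript("""
CREATE TABLE big_dim  (bd_id INTEGER PRIMARY KEY, region TEXT, active INTEGER);
CREATE TABLE big_fact (bf_id INTEGER PRIMARY KEY, bd_id INTEGER, amount REAL);
""")
cur.executemany("INSERT INTO big_dim VALUES (?, ?, ?)",
                [(1, "NORTH", 1), (2, "SOUTH", 1), (3, "EAST", 0)])
cur.executemany("INSERT INTO big_fact VALUES (?, ?, ?)",
                [(1, 1, 10.0), (2, 1, 15.0), (3, 2, 7.5), (4, 3, 99.0)])

# Do the expensive join, plus SOME of the filters, once and store the result.
# This is an ordinary table standing in for the materialized view.
cur.execute("""
CREATE TABLE mv_bf_bd AS
SELECT bf.bf_id, bf.amount, bd.region
FROM   big_fact bf
JOIN   big_dim  bd ON bd.bd_id = bf.bd_id
WHERE  bd.active = 1
""")

# Several later queries read the stored result instead of repeating the join.
total_by_region = cur.execute(
    "SELECT region, SUM(amount) FROM mv_bf_bd GROUP BY region ORDER BY region"
).fetchall()
north_rows = cur.execute(
    "SELECT COUNT(*) FROM mv_bf_bd WHERE region = 'NORTH'"
).fetchone()[0]

print(total_by_region)
print(north_rows)
```

Unlike a real Materialized View, the queries here have to name the stored table themselves, and nothing tracks whether the base tables have changed since it was built.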

I know I have not explained Materialized Views in enough detail to help you use them (though I might come out of retirement to write about them based on real world experience I had with one of my last clients), but that is not my point, it is just an example of something that can really help certain Oracle systems run more efficiently. But it is hardly used anymore, possibly as it is old and not talked about much.

Just because an Oracle feature is old, it does not mean it is not still of use. How do you find out about these features? Well, I have a load of old performance and database design manuals I am throwing out that you could buy… 😄. Being serious, go have a look at the concepts manual. I used to always recommend all DBA-types and Oracle Developer types read the concepts manual for their version of Oracle. I still think it is a good idea (though I have not done it for a few years as, in my opinion, the manuals got a bit crap for a while – they seem better now). Alternatively, go to speak with someone really experienced (and thus probably quite old, but there are exceptions), describe the issue you have, and they might say something like “ohhh, that sounds like Results Cache could help – but be aware of these 3 terrible drawbacks of them”.

This post is in honour of Joel Kallman, who was a tireless advocate of learning & community. Just like him, I think the more we share our knowledge and help each other, the better we will all become.

Really Simple Naming Standard To Simplify Everything November 29, 2022

Posted by mwidlake in Architecture, database design, development, PL/SQL, SQL. Tags: PL/SQL, SQL, standards, system development

Many years ago I came up with a way of naming indexes, variables, table aliases, and several other things that is incredibly simple and, for me at least, Just Works. This might not sound like a big deal but it’s one of the key things that has really helped me create readable & easily understandable code, consistent naming for objects, and database designs that make a lot of sense just by looking at them.

Not only that, but this naming convention also saves me a lot of time & mental effort. It allows me to apply most of my limited brain capacity to making my SQL & PL/SQL code work, and not to stuff that ultimately should not matter – like what to call a variable.

The Starting Point

We generally name our tables after the objects or data they hold, so they are called PERSON, ORDER, ORDER_LINE, ADDRESS, INPATIENT_STAY, and for intersection entities they are called things like INPATIENT_BED_USAGE, EMPLOYEE_ROLE. Anyone looking at a database like this will get the general idea of what is in each table and how some tables relate to each other. That’s all good.

Naming columns is generally easy too. FIRST_NAME, LAST_NAME, HOUSE_NUMBER, HOUSE_NAME, ORDER_DATE, but some columns are more generic like ID or UNIQUE_ID for a system-generated primary key. START_DATE & END_DATE, CREATED_DATE, STATUS all generally make sense within the table. A lot of places have some simple standards for column names, such as naming DATE columns so that you indicate if they have a time element (ADMITTED_DATETIME), or indicating in the name that a column is an inherited key from a parent table (CUSTOMER_ID, CUST_ID, CTSMR_ID… hmm, maybe this is where issues start occurring).

Table and column names are generally defined once, mostly when the application is first designed (please tell me you at least design your schema at the start) and then groups of new ones are introduced when new functionality is added.

But then you need to come up with things like index names or what your variable names will be in code, and often there are no standards for that. This can result in the awful use of T1, T2, T3 for table aliases and variables A, B, C etc. You look at such code and spend ages working out what table is T7, what is the variable F for?

This lack of standards and thus consistency can also be true of those columns inherited from the other end of a foreign key, which is why some databases end up with a load of columns with different names linking to the same table (CUSTOMER_ID, CUST_ID, CTSMR_ID etc).

So try this:

Naming Secondary Objects

This is the important bit – you use a short (generally 4 letter) acronym or alias based on the leading letters of the words in the parent object name.

If the name of the parent object is one word, like PERSON, then the alias is the first four letters – PERS

If the name of the parent object is two words, like PERSON_NAME, then the alias is the first two letters of the two words – PENA

If the name of the parent object has more than two words, like PATIENT_CASENOTES_FRONTSHEET, then the alias is the first letter of each word – PCF. A long table name like REGIONAL_SALES_SUMMARY_BY_MONTH would be RSSBM (these are true acronyms)
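The three rules above are mechanical enough to sketch in a few lines of Python. This is only an illustration, not something from the original system: the function name `make_alias` is made up, and it assumes the words in an object name are separated by underscores.

```python
def make_alias(object_name: str) -> str:
    """Derive the short alias from an underscore-separated object name.

    Hypothetical helper: one word -> first four letters,
    two words -> first two letters of each, more -> first letter of each.
    """
    words = [w for w in object_name.upper().split("_") if w]
    if not words:
        raise ValueError("object name contains no words")
    if len(words) == 1:
        return words[0][:4]
    if len(words) == 2:
        return words[0][:2] + words[1][:2]
    return "".join(w[0] for w in words)


for name in ("PERSON", "PERSON_NAME", "PATIENT_CASENOTES_FRONTSHEET",
             "REGIONAL_SALES_SUMMARY_BY_MONTH"):
    print(name, "->", make_alias(name))
```

A one-word name shorter than four letters simply keeps all its letters, which seems the least surprising reading of the rule.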

Applying this naming convention takes no thought and no decision making in 99% of cases. And, especially once you have been looking at the system for a couple of hours, the aliases make total sense:

You get the idea.

You might have wanted to use ORD as the alias for ORDER, as it reads better or you are used to it being reduced to ORD, but stick to the rules. As soon as you break the rules you start losing the benefit as you have to remember the exceptions – and mistakes are likely to happen “further down” the naming hierarchy. Over the years I have been using this method, it is when I am less firm about applying the rules rigorously that I get less benefit. But see the end of this post for what you do when you are forced to make exceptions.

Using The Alias

The first thing you do with the alias is use it in column names. I don’t like having a column called simply “ID” for a system generated unique identifier. It means you have loads of columns in the data dictionary called ID and it makes looking up information on the column a bit more clunky. In many systems I’ve seen a real mix of using full_table_name_id, whitespaceremoved_id, part_of_nme_id. Not any more, now you can have a rule that it is always tablealias_id. Similarly the primary key created on a table is tablealias_pk.

Foreign keys? It’s tablealiasfrom_tablealiasto_fk so the foreign key from the PERSON table to their current ADDRESS is PERS_ADDR_FK. If things are a little more complex such as having links from a CUSTOMER table to a business’s head office address and delivery address you might amend the relevant column names to include a brief descriptor of the address type, and then use the alias rules for that descriptor too, as I show below:

The name of the sequence for generating a unique identifier for the CATALOGUE table? CATA_SEQ { I don’t know why people insist on putting SEQ or similar in the name of sequences, it just seems almost universal…}

I also use this naming convention for my indexes. What do you think the below index is on?

PERS_SENA_FINA_DOB

Yes, SECOND_NAME, FIRST_NAME, DOB on the PERSON table. If I see that index name in an explain plan I know immediately what columns are involved on what table. Now, if I was being lazy that index might be SN_FN_DOB and in some ways it is easier to read, people will know what SN, FN, and DOB stand for – but once you start breaking the standard then you have to make a decision when adding indexes if you stick to the rule or not, you get more situations where you might not be sure which column SN really is for (OK, maybe not in this case).
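Once the alias exists, every derived name in this section is just string assembly. Here is a rough Python sketch under the same assumptions as the rules (underscore-separated names); all the function names are hypothetical and exist only for this illustration.

```python
def make_alias(object_name: str) -> str:
    """Hypothetical helper applying the one/two/many word alias rules."""
    words = [w for w in object_name.upper().split("_") if w]
    if len(words) == 1:
        return words[0][:4]
    if len(words) == 2:
        return words[0][:2] + words[1][:2]
    return "".join(w[0] for w in words)


def id_column(table: str) -> str:
    # System-generated identifier column: tablealias_id
    return f"{make_alias(table)}_ID"


def pk_name(table: str) -> str:
    # Primary key constraint: tablealias_pk
    return f"{make_alias(table)}_PK"


def fk_name(from_table: str, to_table: str) -> str:
    # Foreign key: tablealiasfrom_tablealiasto_fk
    return f"{make_alias(from_table)}_{make_alias(to_table)}_FK"


def seq_name(table: str) -> str:
    # Sequence feeding the identifier column
    return f"{make_alias(table)}_SEQ"


def index_name(table: str, columns: list[str]) -> str:
    # Table alias followed by the alias of each indexed column, in order
    return "_".join([make_alias(table)] + [make_alias(c) for c in columns])


print(id_column("PERSON"), pk_name("PERSON"))
print(fk_name("PERSON", "ADDRESS"))
print(seq_name("CATALOGUE"))
print(index_name("PERSON", ["SECOND_NAME", "FIRST_NAME", "DOB"]))
```

The last call rebuilds the index name discussed above from nothing but the table and column names, which is the whole point: nobody had to decide anything.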

Simplification Of Code

Where this all really comes into its own is naming variables and table aliases in code. I strongly believe any production-quality SQL should always use table aliases and the use of T1, T2, T3 or A, B, C etc for them should be punishable with chilli juice in the left eye. With the naming method I describe here you no longer have to think about variable names at all and it makes the code incredibly readable.

I did not have to think about the table alias when I wrote the below code. I did not have to check what I had set them to when I used them in the code. Anyone looking at the code can see that the table aliases mean something and it makes it clear what each column is, what table it is from, even if the actual column name is a little ambiguous. With aliases of A, B, C or T2134, T3341 etc you endlessly have to keep checking which table (or subquery) is being referenced.

(please don’t critique the code or tell me about bugs or *anything*, it’s just a stupid example I knocked up in a text editor to make the point, OK?)

I use the same alias method for naming variables in PL/SQL too, as then I don’t need to think much about the names of many of them. The below is a sample of some PL/SQL code, using a lot of variables. I have a standard batch processing architecture using the tables PROCESS_MASTER, PROCESS_RUN and PROCESS_LOG. What to call the package level record variables derived from them? I prefix all package variables “PV” and record variables are suffixed with “REC” (not RECO, it’s not derived from an object name is my excuse) so they are PV_PRRU_REC & PV_PRMA_REC, no thought needed. And once you spot the other three table names referenced in the code, the other variables make sense. For as long as you look at the code you’ll know what TONA, RONA and ROTY are referring to:

Pesky Issues

The most common drawback of the “four letter alias” (as I inaccurately think of it) is alias clashes. This tends to happen most with table names that are two words long. CONSULTATION_DEPENDENCIES and COUNTRY_DETAILS would both have an alias of CODE. So you pick one and make the alias the first letter of the first word, three letters of the second, so COUNTRY_DETAILS gets an alias of CDET. In my experience of using this naming convention for, oh, 25 years I’ve only had a few clashes and the 1:3 change in the letter pattern has worked.

The other drawback is getting an alias that is not, let us say, acceptable in polite company. AREA_SERVICES was an early one. SOFTWARE_HARDWARE_INTEGRATION_TESTS was another, but I strongly suspect that was an aimed-for alias by someone who was not happy in their role. Again, the swapping to a 1:3 letter derivation cures the problem.

There are usually so few exceptions that you are forced to introduce that everyone involved on the system gets to know them very easily.

When I was working on a very, very large project for an energy company I got them to start using this naming convention, and people did get really quite worked up about the potential for alias clashes. So they introduced a reference table that you populated with all the table names and it would generate the alias and, if it clashed with an existing one, it would swap to 1:3, then 3:1 if it again clashed, then raise an error for manual intervention. I always felt they had taken things a little too far but you can see that the naming convention can be 99% automated.
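The fallback order described above (2:2, then 1:3, then 3:1, then give up and ask a human) can be sketched like this. It is a guess at the shape of such a tool, not the energy company's actual implementation: the dictionary standing in for the reference table, the function names and the optional `banned` set for aliases not fit for polite company are all assumptions.

```python
def candidate_aliases(object_name: str) -> list[str]:
    """Aliases to try, in order. Only two-word names get fallbacks."""
    words = [w for w in object_name.upper().split("_") if w]
    if len(words) == 1:
        return [words[0][:4]]
    if len(words) == 2:
        first, second = words
        return [
            first[:2] + second[:2],  # standard 2:2 pattern
            first[:1] + second[:3],  # 1:3 fallback
            first[:3] + second[:1],  # 3:1 fallback
        ]
    return ["".join(w[0] for w in words)]


def register_alias(object_name: str, registry: dict[str, str],
                   banned: frozenset[str] = frozenset()) -> str:
    """Record and return the first candidate that is neither taken nor banned.

    `registry` maps alias -> object name and stands in for the reference table.
    """
    for alias in candidate_aliases(object_name):
        if alias not in registry and alias not in banned:
            registry[alias] = object_name
            return alias
    raise ValueError(f"no free alias for {object_name}, manual intervention needed")


registry: dict[str, str] = {}
print(register_alias("CONSULTATION_DEPENDENCIES", registry))  # takes the 2:2 alias
print(register_alias("COUNTRY_DETAILS", registry))            # falls back to 1:3
print(register_alias("CONTRACT_DETAILS", registry))           # falls back to 3:1
```

A fourth table such as CONTACT_DETAILS would exhaust all three patterns against this registry and raise the error, which is the point at which a person picks an exception by hand.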

SUMMARY

Generate a short alias for all objects by taking the first letter(s) of the object name.

Use that in column, constraint, index, and sequence names.

Now use the alias in your code for table aliases and variables.

Greatly reduce both the time you spend coming up with names for these things, and the potential for code confusion.

Spend that time and brain effort on making the actual code do what it is supposed to do and go home early.

Friday Philosophy – Early Mistakes Uncorrected Means Poor Final Systems August 13, 2021

Posted by mwidlake in Architecture, development, Friday Philosophy. Tags: DIY, Humour, system development

A few days ago I fitted a sink (hand basin) in my garage. Bear with me, this IS related to I.T.

It’s maybe not so clear from the pictures, but that sink is at an angle (see the spirit level in the second shot, that bubble is way over from being in the centre), leaning back towards the wall on the left. And the splash back is also not straight, it is a good 5 degrees from the vertical. I’m pretty good at DIY – putting up shelves, building cupboards, fixing furniture, building the odd bit of wall, erecting & insulating sheds (which I take power and lighting down to)… And I am very, very meticulous about things like getting things straight and level. As I progressed with the job of putting up this sink and reflected on the process, it so reminded me of several application development projects I’ve been involved in (or was brought in to help crowbar back into shape).

Where Did I Go Wrong?

I’ll add some pictures to the bottom of this blog, but for now I’ll just describe what happened. When I offered up the sink to the wall I realised that the pipework (put in by competent builders but with no real consideration of my planned improvements) was exactly where the sink should go. And I also realised that even if the sink was put higher (way too high for me) or lower (well too low for a normal heighted person) the pipework would still be an issue. Also, the back wall is not quite vertical and the two walls are not quite at 90 degrees. This sort of thing is not unusual in any building (though slightly vexing in something built only 12 years ago) and to me it’s like the not-quite-perfect situation most application developments start at. You might not be using the best database for the solution, you might have to use a computer language that is not the best but you have staff skilled in it. There will be bizarre parts of the application you are replacing that you have to keep as the end users have got used to them – or even built business processes around them and no one wants to change those now.

Good developers and systems engineers can work around such issues. A good DIYer can take into account badly placed pipes and wonky walls. I could have cut out and replaced the pipework – but it would have been a lot of work and I am not very good at plumbing (which is why I use push-fit plumbing: less neat & more expensive, but dead easy & quick). This would be like changing those slightly crazy business practices forced by the old system for ones that would benefit the business eventually, but it’s a lot of effort to change. As for the wonky walls, sorting that out would be like re-skilling or replacing my whole development team to move to better technology – Possible, but not going to happen.

The pragmatic solution was to mount the sink on wooden battens and make the battens thick enough to avoid the pipework and allow me to correct for the wonky walls. A cheap, pragmatic solution to the imperfect starting conditions.

Only, I messed up. I spent hours first doing the extra plumbing work to get an isolation valve beyond where the sink & splashbacks would be (so the water could be turned off for maintenance or leaks), and a feed for the hot water unit to come. Then I failed to plane off the battens for the sink properly and they had to come off and be messed with several times. When it came time to mount the sink, which goes on two metal pegs, I screwed one of them in too high. Bloody hell.

I was tired, annoyed. To fix the peg I would have to replace the batten. I did not want to take that sodding batten off the wall and replace it (it had been the hard one to make). It was also the end of the day. So I did a “bodge job” – which means a quick and poor quality alteration to get you past a problem. I packed out the space between one mounting peg and a guessed proper height for the sink. I replaced proper planning and good development with something you throw together at the end of an Agile Sprint, so I could meet my objectives and go home.

The next morning I realised the sink was more than a little uneven – but I decided to continue. And that was it, I had messed up the whole job. In my head I thought I could modify the upright battens, get a little leeway with the tiling and grouting and make up for the “bodge job”. I won’t bore you with the full details but I was so utterly wrong. What would have been two totally square and vertical battens for one splashback and a pair of slightly trimmed ones for the other turned into endless alterations and re-working, especially as, towards what should have been the end of the job, it was obvious the tiles were going to look awful (good tiling is all about straight, parallel, horizontal, well squared lines – they magnify imperfections, of which I now had many) so more reworking was required.

If I had fixed the sink mounts first thing that morning, I think I would have finished the job in that same morning, not one and a half days later.

It’s Not The Starting Conditions, It’s The Cutting Corners

The more I have thought about that sink, the more it echoes what I’ve seen in the world of computer application development.

Many times, when the development of an application has gone wrong, I hear people blame the starting conditions. “We had to use Java Struts” or “The analytics team demanded they could query anything” or “We had to make the system talk to the old warehouse system on Informix” or whatever. I doubt these starting conditions were really a big problem, you knew about them and your design should have taken them into account. A good designer will plan around them, a good developer will code in an interface, a good DBA will add in the required indexes.

Not properly planning the system or not prototyping the solution will invariably lead to disaster. In my sink scenario, that would have been the case if I had not used my experience and thought about the long term and decided to try to attach the sink to the plasterboard walls directly, just under the pipework. I’d never have got the tiles on and the sink would have fallen off the wall very soon. I’m not saying you don’t see this failure to plan upfront in application development, it has sadly become more common. Too many places think “Agile” or similar is just hacking the system together and fixing what is wrong. That is, to use a coarse term, just bollocks. Agile only works well (in my experience) when done on top of a very good design, created by skilled people. It’s not easy.

However, the most common cause of issues I have witnessed, by a long way, is that of “bodging a solution” – and again it is very strongly associated in my experience with “agile” done wrong, or even worse the “develop to fail – and then fix quickly” mentality. Yes, you will get something that works. Very quickly. And it will probably be an “uneven sink”. It will do the job (hold water, let people register for an event) but as soon as you try and extend the system, it gets harder and harder and harder. And you spend more and more time working through that technical debt you inflicted on yourself. And the end result will be very much sub-optimal. OK, a simple thing will be fine when put together like this (a shelf to hold photographs, a simple calendar app) but a complicated system like a hospital patient administration system or a fraud detection application, a whole bathroom refurbishment… It’s going to be a mess. I’ve seen and suffered from this cascade of crap so many times over the years.

The whole idea of things like sprints, where you are put under tremendous pressure to just make your bit work, causes a lot of bodging of things. Yes, it does, I’ve seen it. I’m sure if things like code review, pair development, and periods of planning are put into Agile (like they are supposed to be) the bodges could be un-bodged. But I simply don’t see this. And the whole “fail fast and fix” idea seems to me to absolutely guarantee a system covered in duct tape and almost unmaintainable. All for the sake of get it done NOW.

I learnt many years ago in I.T. that it is far more efficient if you fix the mistakes when you first see them and fix them well. Always build on a stable base and keep it solid. Otherwise, as you progress, the pain just builds and builds and builds. This latest DIY experience just hammered it home.

The sink I put up does work. None of the pipework leaks, the splashbacks do not have gaps to let water in, the tiles are aligned (mostly), they are high enough to catch splashes. I know I can put the water heater above it… but it will be higher than I would want and if I do not make it lean to the left, it will make the sink look even more skew-whiff. But by the end I had put in twice the effort (if not more) than I needed to in order to have a functional sink – and it looks weird.

Do not bodge the beginning of a project, make it right and save yourself from endless pain later on!

For the interested, a pictorial progress of the job.

Friday Philosophy – Sometime The Solution Has To Not Only Match The Problem But Also… August 4, 2017

Posted by mwidlake in Architecture, development, Friday Philosophy, Perceptions, Programming. Tags: behaviour, perception, performance, system development

…The People!

When you design a system for end users, a good designer/developer considers the “UX” – User eXperience. The system has to be acceptable to the end user. This is often expressed as “easy to use” or “fun” or “Quick”. But in reality, the system can fail in all sorts of ways but still be a success if the end user gets something out of using it. I’ve said it before and I’ll say it again and again until I give up on this career. In my opinion:

User Acceptance is the number one aim of any I.T. system.

OK, you all know about UX probably. But what about solutions that have no End Users? I’m thinking about when you create a technical solution or fix for an internal system, to be used by fellow I.T. professionals. How many of you have considered the skills and temperament of the people who are going to house-keep the solution you create? I suppose I have had opportunity to think about this more than some of you due to how I work: I’m a consultant who gets called in to fix things and then leave. At times I have chosen a solution that has been influenced by the people who will be looking after it.

I’ll give you an example. At one site that I worked at for about 9 months, I did a lot of work for one system. The developer/systems administrator who looked after the system was…stupid. I don’t really like saying that, we all vary in our skill set, experience, intelligence, *type* of intelligence (I know some people who can speak 3 languages or know a lot about history but could not wire a plug). But this guy really seemed to struggle with logic, cause-and-effect or learning anything new. And I had to help him look after this database application with one main, huge, hulking table. It had to be partitioned, those partitions maintained and the data archived. I implemented the partitioning, I explained partitions to him several times, what was needed to maintain them, where to look in the data dictionary for information. It was like talking to my mum about it. He just seemed not to understand and his efforts to code something to do what needed to be done were woeful.

I knew it was not me, I’ve run enough training sessions and presented so often that I know I can explain myself (Well, I hope so! Maybe I am deluded). He just was not getting it. Maybe he was in the wrong job. So I wrote him a set of SQL-generating scripts to get him going. He kept messing up running them. In the end, I knew I was about to leave and when I did within 3 months the real customer would have a broken system. So I wrote a mini-application in PL/SQL for him to do what needed to be done. And set it to email a central team if it failed. The team he would call when he broke it all again. I also simplified the solution. My original system had some bells and whistles to help with future changes, such as over-riding where new partitions went or how old ones were compressed. I stripped it out to keep it as simple as possible. I altered the solution to suit the person that would run it.

I’ve done something like this a few times over the years. Usually it is more to do with the skill set of the team as opposed to actual ability. I’ve on occasion worked with people who are new to Oracle and my time is limited so, rather than give them a solution written in PL/SQL that none of them know, I have done so with SQL and cookery instructions/shell scripts. It’s not the best solution but it is something they can live with.

More recently I had to look at fixing the performance of some SQL statements. Baselines would have done the job perfectly. However, the team were all Java experts and had no desire at all to learn about database administration. (To be frank, they had no time to learn either, it was the usual situation of them having 75 hours of work each every week as management thought just shouting would get things fixed, not hiring enough people). I strongly suspected that they would forget about the baselines and if they had a problem they would be confused as to what was going on. So I fixed the key SQL statements with a set of hints to force both the overall structure of the execution plans as well as which indexes to use etc – and said over and over and over and over that if they ever changed indexes or migrated to a later version of Oracle, those hints would need reviewing. They were, in effect, part of their code base. A big advantage of the hints was that they would see them in their code and it would remind them what had been done. They seemed happy with that.

My point is, sometimes the “best” solution is not the correct one, even when you are keeping within the walls of the computing department(s). Sometimes you need to think about who you are giving the solution to and change the solution accordingly.

Friday Philosophy – “Technical Debt” is a Poor Term. Try “Technical Burden”? June 30, 2017

Posted by mwidlake in database design, development, Friday Philosophy, Management. Tags: Architecture, design, system development

Recently my friend Sabine Heimsath asked a few of us native English speakers what the opposite of “technical debt” was. My immediate reaction was to say:

I’d say (sarcastically) “proper development” or “decent designer” or even “what we did 25 bloody years ago when we were allowed to take pride in the software we created!”

But my next comment was less reactive and more considered. And that was to say that I did not like the phrase “Technical Debt”:

A debt is when you owe something to someone, to be paid back. You do not owe anything to someone when you build poor systems, you are actually creating a “technical burden” – something those in the future will need to live with and may eventually have to sort out. Those who created the bad app or design will probably not be the ones fixing it – as in paying the debt.

That is of course not always true. Some of us have had to end up fixing a poor solution that we implemented – usually implemented despite our protestations that it was a daft thing to do. But the usual case is that a badly thought-out solution is implemented in a rush, with little design, or with inadequate testing, because of a management pressure to be “agile” or “fast moving”. And it is done with cheap or over-stretched resource.

Also, “technical debt” to me sounds too organised and too easy to fix. If you have a financial debt, you simply pay it back with some interest. In almost all situations I have seen where there is a “technical debt”, the interest to pay – the extra effort and time – is considerably more than was saved in the first place. Sometimes it is more than the original cost of the whole project! Loan Shark territory.

When the poorly designed/implemented system falls over in a heap sometimes the hard-pressed local staff lack the skills or bandwidth to fix it and “Experts” are called in to sort it out. And part of the time taken to fix it is the expert going “why in f**k did you ever think this was a good idea?” (Maybe using better terminology, but that is what they mean!). Being more serious, sometimes the largest slice of time is when as an “Expert” you have to persuade the people who own this mess that it really does need sorting out properly, not just another quick hack – and it really will take much, much more effort than what they originally saved by deciding to implement this fast & dirty. Sorry, I mean “lean & mean”.

This situation often has a secondary impact – it makes the people who originally implemented the solution look poor. And that may or may not be fair. I’ve seen many cases where the original staff (including myself) were forced to do things they did not like by timing constraints, lack of budget or simply the ridiculous demands by someone higher up the organisation who thought simply shouting and demanding would make good things happen. They don’t, you end up creating a burden. Though I have also seen poor solutions because the original team were poor.

I think at the moment a lot of what is called “systems development” is more like a desperate drive to constantly cut corners and do things quicker. If it goes wrong, it’s just a debt, we pay it back. No, no it is not. It’s often a bloody mess and a Burden for years. I keep hoping that, like many things in I.T., this will be a phase we can cycle out of and back into doing proper planning and implementation again. Yes, anything that speeds things up without losing planning/design is great. And if you have the skills, you can do proper Agile, designing the detail as you go – IF you have the general over-arching design already in place. But I wish there was more consideration of the later cost of quick & dirty.

So what was the term Sabine wanted? Well, I can’t speak for her, I am not 100% sure what she was looking for. But from my perspective, we should not say “Technical Debt” but “Technical Burden”. And the opposite might be “Technical Investment”. You spend a bit of time and effort now in considering how you can create a solution that can expand or is flexible. I know from my own personal experience that it is when you are given the chance to do those things that you provide a solution that lasts and lasts and lasts. Only when I have been allowed to properly consider the business need do I create something still used in 10 years. Or 15. Or maybe even 20. That might need some checking!

So, if you really want to build systems to support a successful business, and not a short-lived flash, perhaps you should be saying something like:

You are asking me to create a Technical Burden. Would you rather not help me create a Technical Investment?

If nothing else, you might at least be adding a new entry to “Buzzword Bingo”.

Friday Philosophy – When Tech Fails to Deliver, is it Always a Problem? December 9, 2016

Posted by mwidlake in Architecture, development, ethics, Friday Philosophy.Tags: behaviour, knowledge, system development

11 comments

I nipped out to the local supermarket this lunch time to get stuff. I use one of those self-use barcode scanners to log all the goods I put in my basket (apart from the bottle of whisky I was stealing). I then go to the payment machine, scan the “finish shopping” barcode and try to pay. I can’t pay.

I can’t pay as I bought some paracetamol (note to US readers, you know it as acetaminophen). It turns out you need to be 12 to buy paracetamol. Fair enough, but why did I have to stand there and waste 30 seconds of my life before the assistant for the area noticed and came over? She had to use her special device to access the permissions screen, check I was 12 (the greying beard helps) and authorise it.

I asked why I had to wait. “So I can ensure you are old enough – the machine does not know.” But it does! Or at least it should. I’m using their self-scan service for which I have to be registered. They know my name, address, age, hair colour and inside leg measurement. The system knows I am old enough. Plus I have to pay with a credit/debit card (no cash option with this system). You can’t have a credit card until you are 18 in the UK so by using one of them it knows I am old enough to buy the pills – and even the bottle of whisky I was stealing. And when you use any card, it checks your details. So if I was using a debit card it could check my age at that point and stop me when it makes the check. It’s possible and should be done!

The assistant had wandered off long before I finished making this logical case. I was just an annoying customer and she’d done what I needed her to do. But it really annoyed me – it is possible for the system to check me using technology and the data at hand, and not make me wait. The problem is, they were too lazy to build this limited smarts into the system!

There is a lesson here. And that lesson is this – I should stop being such a self-centred, argumentative and miserable old sod. Firstly, I had to wait 30 seconds (and I am probably exaggerating that). Big deal, I had hardly been inconvenienced and it was a lot quicker than going to a normal till. Secondly, the assistant can’t do anything about the software behind the system. I mean, many of us spend our lives working on computer systems and often we can’t make any changes. Thirdly, I am aware that some parents give their children their credit card & number (the idiots!) so even though it is illegal to do this, the result is there are a lot of people under the age of credit who have the means to pay for dangerous things (booze, cigarettes, paracetamol, knives, DIY expanding foam, ‘Viz’ magazine…).

Just because something is possible with the data to hand, sometimes it is not really worth much effort to make it happen.

And sometimes, although it seems logical & sensible given all the parameters (they have my info, no one but me should be using that card) in the real world those rules and data associations are not reliable. There is no enforced RI on our lives, at best there is a set of intended/encouraged limits. A person checking my age is way more reliable than some algorithm in this case.

So next time I whine about waiting 30 seconds in the queue, I hope the assistant just gives me a withering look and tells me to grow up.

I also hope they do not check my basket for un-scanned booze.

(* Just for the record, everything about the whisky was untrue. It was gin).

((And being serious, such systems prevent fraud by 2 methods.

The first is that 1 in X times you get re-scanned. The assistant has no idea if they scan anything you had not and this is on purpose – so there is no scene in the shop. But the comparison is made and recorded, for further action.

The second is that apparently they can spot likely cheats just by the data you give them when you sign up and your spending habits. Now that is ‘Big Data Analysis’.

))

Why oh Why Do We Still Not Have a Fast Bulk “SQL*Unloader” Facility? December 1, 2016

Posted by mwidlake in Architecture, database design, performance.Tags: Architecture, behaviour, design, system development

6 comments

Way back in 2004 I was working at the UK side of the Human Genome project. We were creating a massive store of DNA sequences in an Oracle database (this was one of two world-wide available stores of this information, for free & open use by anyone {* see note!}). The database was, for back then, enormous at 5-6TB. And we knew it would approximately double every 12 months (and it did, it was 28TB when I had to migrate it to Oracle 10 in 2006, over 40TB 6 months later and grew to half a petabyte before it was moved to another organisation). And we were contemplating storing similar massive volumes in Oracle – Protein, RNA and other sequence stores, huge numbers of cytological images (sorry, microscope slides).

Significant chunks of this data were sometimes processed in specialist ways using Massively Parallel Processing farms (hundreds or thousands of compute nodes so we could do “2 years of compute” in a day or even a few hours). Oracle was never going to be able to support this within the database.

But Oracle had no fast, bulk data unloading offering, a “SQL*Unloader”. Other RDBMSs did. And that gave me an issue. I had to argue as to why hold this data in Oracle where it was hard to yank out chunks of it rather than keep it in say a simple file store or MySQL?

That year I was invited to Oracle Open World and I was given a slot with a senior VP in the RDBMS/OCFS area (as that is what we were using – I know! But we were). I had been warned by someone that he could be a little “robust”. In fact my friend called him “Larry’s Rottweiler”. However my chat with the SVP went fine. Until he asked me for the one thing I would really want and I said “well, it’s been promised for the last 2 or 3 versions of Oracle, but it still does not exist in V10 – I’d like a SQL*Unloader so we can rapidly extract bulk data from Oracle into a structured file format”. He went very cold. I continued: “We need to do really intensive processing of the data with ‘C’ and if it is locked into the database we can’t do that. It’s hard for me to justify using Oracle as our primary data store if the data is now stuck there…”

He went ballistic. He was furious, he was raising his voice, leaning forward, I honestly thought he might hit me. I can’t remember the whole rant but one line stuck: “We will NEVER help you get your data out of Oracle!”. I ended the discussion as soon as I could – which obviously suited him fine also.

And here we are over 10 years later and no SQL*Unloader has appeared. And, as far as I can see, there is no longer even a suggestion that “one day” one will exist. You can obviously get data out of Oracle using SQL*Plus, SQLcl or PL/SQL’s UTL_FILE. But you have to write your own code and to make it parallel or fast is not that simple. And there are some commercial tools out there to do it. But fundamentally we do not have a simple, robust & fast data unloader tool within the Oracle toolset.
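To give a feel for the “write your own code” route, here is a minimal Python sketch of a hand-rolled unloader. It is not an Oracle tool and not what we used at the time: it uses the standard library's sqlite3 module purely as a stand-in for a real database connection, and the function name unload_to_csv, the seq table and the batch size are all made up for illustration.

```python
import csv
import io
import sqlite3


def unload_to_csv(conn, query, out, batch_size=10_000):
    """Stream the rows returned by `query` to the file-like `out` as CSV.

    Rows are fetched in batches so the whole result set never has to sit
    in memory at once. Returns the number of data rows written.
    """
    cur = conn.cursor()
    cur.execute(query)
    writer = csv.writer(out)
    # Header row taken from the cursor's column metadata.
    writer.writerow([col[0] for col in cur.description])
    total = 0
    while True:
        rows = cur.fetchmany(batch_size)
        if not rows:
            break
        writer.writerows(rows)
        total += len(rows)
    return total


def demo():
    """Unload a tiny, invented table from an in-memory database."""
    conn = sqlite3.connect(":memory:")
    conn.execute("CREATE TABLE seq (id INTEGER, bases TEXT)")
    conn.executemany(
        "INSERT INTO seq VALUES (?, ?)",
        [(1, "ACGT"), (2, "GGCA"), (3, "TTAG")],
    )
    buf = io.StringIO()
    count = unload_to_csv(conn, "SELECT id, bases FROM seq ORDER BY id", buf, batch_size=2)
    conn.close()
    return count, buf.getvalue()
```

Even this toy version is single-threaded and leaves out everything that makes the real job awkward: splitting the work by key range or partition to run it in parallel, handling large objects, and restarting after a failure. That is the part that is “not that simple”.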

But I remain mystified as to why Oracle Corp will not provide a tool to do this. I can see the argument that doing so would help you migrate your Oracle Data set to a different platform and thus move away from Oracle, but I think that is a dumb argument. If you are willing to dump your Oracle environment for something else you are already not happy – and making life difficult is only going to make you less happy and almost certain to never come back! It’s incredibly inconvenient to extract all your data at present but compared to the cost to your organisation of changing a central part of your infrastructure, it’s just a bloody annoyance. It’s like domestic service providers (telephone, internet, TV) that make it easy for you to sign up but a nightmare to leave. Thus guaranteeing that once you leave (cos you will) you will certainly NOT be going back to them in a hurry!

So for the sake of what I see as a misplaced protectionist stance they close the door to rapidly extracting data from Oracle databases for processing in other ways. I’ve come across other situations like this, before and since, but the Human Genome issue threw it into sharp relief for me. The end result I’ve seen a few times (and effectively what we had at the Sanger) is the data gets stored at least twice – once in Oracle and then somewhere else where it is easy to access. Now that’s a daft and bad place to be, multiple copies and one of them at least lacking RI. Thanks for forcing that one on us Oracle.

Something that is changing is Cloud. Oracle wants us all to move our data and systems into the sky. Their whole marketing message at the moment is nothing but cloud-cloud-cloud and they give the impression that cloud is in fact everything, every solution. So how do you get 10TB of data into the cloud? It’s not so hard really. You can trickle it in over time, after all networks are constantly getting faster, but for bulk data this is a poor solution. Or you can ship your data physically. You can’t beat the bandwidth of a transit van. I’ve heard Oracle people at OOW and other conferences saying this is easy-peasy to arrange (as it should be, I’ve done it for clients doing migrations a couple of times).

But how are you going to get it out again? How well do you think that a company that has never provided SQL*Unloader is going to help you get bulk data back out of your Oracle cloud-based databases into on-premises systems? And how well are they going to help you shift it from one of their systems to another cloud system? One they have not sold you? Sometimes people talk about business relationships being like a marriage. Well once you have gone cloud, you might have ensured any divorce is going to be really messy!

Update – see the comment by Noons on the fact that VLDBs and Data Warehouses are often not as static as you think – so there can be a constant need to move a lot of data into and out of such very large data stores. Cloud is not currently going to support this well. And if you have a data life-cycle management policy, say archiving off data 3+ years old, where are you archiving it off to and how will you do this once it is in the web?

* Please note, this was not human DNA sequence information gathered for medical reasons! It was mostly sequence information for other organisms (chimps, flies, potatoes, rice, viruses, bacteria all sorts) generated by the scientific and, in some cases, biopharma community. There was human DNA sequence in there but by consent and anonymised – you can’t have a human genome sequence without involving some humans! We did not hold your Dodgy Uncle’s DNA test that was taken for that court case…

Friday Philosophy: Be A Hero – OR Be The Best August 26, 2016

Posted by mwidlake in Friday Philosophy, humour, Perceptions, working.Tags: behaviour, Humour, perception, system development

19 comments

There is a crisis! The database is not responding, the apps can’t work and the business is suffering. Management are doing what management are there for – panicking and demanding “Someone Do Something!!!”.

Step forward a DBA who logs into the server, checks the alert logs, spots what is wrong and fixes it. The database starts processing requests, the applications are all working fine and the business is back on track. What a hero!

Such situations are not just the preserve of the database and the DBA of course. You get the hero System Administrators who step in and sort out the lack of storage space at 3am. Or the programmers who look at the code that has been running slow for weeks, that others have not been able to fix, and make it run in 5 minutes rather than 5 hours. All heroes who then bask in the gratitude of management and colleagues. Thank goodness for the Hero Developer/DBA/Sys Admin or whatever. You even get articles and advice on how to be The Hero in some quarters. I’ve even seen job ads like “Are YOU our next Developer Hero?!?”.

Only, 9 times out of 10, whatever was wrong should never have occurred. Yes, there are always going to be hard-to-predict failures or unavoidable catastrophes. But the majority of situations I have seen when the database goes seriously wrong, a critical program messes up badly, or a server goes offline, it is down to something that could and should have been spotted beforehand – or never set up in the poor manner that it has been. These are things like Archive Redo log areas filling up, an “innocuous” network tweak taking out a major connection or a data processing program that goes wrong if it is run with no data to process. Just a little bit of thought or testing will avoid these sorts of issues.

As you get better at your role, and I mean really, truly better and not just older, you learn about better ways to do things. Either you make mistakes yourself and have to fix them (the best way to learn, even though it does not often feel like it), you correct something someone else did poorly, or you read about how to set up systems to be more fault tolerant. You become more experienced with the tools and you grab hold of any new features that are going to make the systems run better. I’d hope we also all learn skills and working practices that help avoid disasters, such as proper testing methodologies (something that we seem to get less and less time & resource for) and proactive rather than reactive monitoring of our systems. If I am owning a database and it unexpectedly runs out of space for the data files or archive redo – I failed. The database did not, I did – as I know how to set up checks for those things.

The best technicians (in my opinion) that I have worked with are all like this. They don’t monitor for things that have gone wrong so much as monitor for things that are going wrong. Every week or month they will change something that was OK – but it could be better, more resilient. The end result is a much quieter life and a substantially better service provided to the business.
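As a rough illustration of monitoring for things that are going wrong rather than things that have gone wrong, here is a small Python sketch that projects when a storage area will fill up from a handful of usage samples. It is only a sketch under stated assumptions: growth is taken to be linear between the first and last sample, and the function names, the sample format and the 30-day warning threshold are invented for the example, not taken from any real monitoring tool.

```python
def days_until_full(samples, capacity_gb):
    """Project how many days remain before usage reaches capacity.

    `samples` is a chronological list of (day_number, used_gb) pairs.
    Uses a simple straight line from the first sample to the last one.
    Returns None if usage is flat or shrinking.
    """
    (first_day, first_used), (last_day, last_used) = samples[0], samples[-1]
    if last_day <= first_day:
        raise ValueError("need at least two samples taken on different days")
    growth_per_day = (last_used - first_used) / (last_day - first_day)
    if growth_per_day <= 0:
        return None
    return (capacity_gb - last_used) / growth_per_day


def space_check(samples, capacity_gb, warn_days=30):
    """Return a one-line status, warning well before the space runs out."""
    remaining = days_until_full(samples, capacity_gb)
    if remaining is None:
        return "OK: no growth"
    if remaining <= warn_days:
        return f"WARN: full in about {remaining:.0f} days"
    return f"OK: about {remaining:.0f} days of headroom"
```

For example, space_check([(0, 400.0), (10, 450.0)], 500.0) sees 5GB a day of growth with 50GB left and warns that the area will be full in about 10 days, long before anything has actually failed.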

But that’s where the rub is. That’s where things become unfair. When you are being the Best DBA or the Best Developer, things just work without a fuss. There are no disasters that impact the business and thus no need for The Hero. The systems run smooth & fast and management figure you are probably not doing that much. Heck, you seem to be spending all your time tinkering rather than fixing stuff! They often don’t get that the “tinkering” is what stops the disasters and the need for Heroes. That can lead to a lack of appreciation for what you are doing and it is extremely hard to see someone get praise for fixing an issue that they should never have let happen and even getting a pay rise and you get just a “yeah, thanks for, like, keeping the lights on I guess”.

I had this in spades in one role. I turned up and the critical databases would all be going down once or twice a week. People just accepted it. I worked on the problems, got my team together (and trained them!) and improved the service. For a couple of years I was a card-carrying member of the cape and spandex pants club. I was a Hero. We provided more services and incidents became very rare. And then they decided I was not doing enough. No problems were occurring so what did they need me for? After I calmed down from that (it took a few months) I decided I agreed with them and left. But I left behind a fantastic team and rock-solid systems. {It actually took me years to stop resenting the way they handled it, to be fair, but I never stopped being proud of what I did and that team}.

So what do you do when you are being the best you can and not the hero and, as a result, you are fading into the woodwork? Well, I advise people to do several things, some of which you can see from a slide (taken from my “disasters” presentation) shown to the left. Record the number of incidents and how they go down as you improve things. Document improved up-time and better performance (which might be the same response time under higher workloads). Generally blow your own trumpet. However, it never seems to be enough to counteract the prestige people get from being the hero. It’s not “Right” but it just seems to be the way it is. I know some people take the other approach, which is to actually let (or even create?) disasters in which they can be heroes. After all, this is your career.
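Recording the numbers does not need anything fancy. As a minimal Python sketch (the function names, the report wording and the figures in the example are all invented for illustration), something like this turns a list of outage durations into the sort of before-and-after figures you can put in front of management:

```python
def availability_pct(period_hours, outage_hours):
    """Percentage of the period the service was up, given outage durations in hours."""
    downtime = sum(outage_hours)
    return 100.0 * (period_hours - downtime) / period_hours


def summarise(label, period_hours, outage_hours):
    """One line per reporting period: incident count and availability."""
    pct = availability_pct(period_hours, outage_hours)
    return f"{label}: {len(outage_hours)} incidents, {pct:.2f}% available"
```

So summarise("Before", 720, [6, 3]) gives "Before: 2 incidents, 98.75% available", and summarise("After", 720, []) gives "After: 0 incidents, 100.00% available". Keeping the same calculation from one period to the next is what makes the trend believable.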

One fix is to just move on. After all, in the situation I described above I had actually completed my job – I had been hired to put in place a professional service and I did. So it would have been best if we had all been grown up and decided it was job done and time for me to move on. As a contractor/consultant this is a lot easier to do. Turn up at a client, be a hero for a while and then do your real job of making the systems solid. And then move on.

But not everyone has that luxury to move on. There may be few opportunities where you live or you would lose other aspects to your job that are very important (good child care is one example I have seen). Or moving roles might be something that gives you a lot of stress. So if you are “stuck” in your role and you are doing the best that you can, it is massively demoralising to fade into the woodwork as a result. What is the reward for all your work – pride and less interrupted nights are good but not getting the credit you deserve is hard.

But in the end I think you have a choice. Be a Hero or be The Best You Can Be. I have to aim for the latter, I can’t knowingly allow disasters without trying to at least warn management it could happen. And if you decide to be the best you can be perhaps you have to accept that, unless your management is very unusual, it may well mean less respect than you deserve. But *you* will know. I suppose it is a pride thing.

Are you a Hero? Or are you Simply The Best!

Friday Philosophy – The Singular Stupidity of the Sole Solution April 22, 2016

Posted by mwidlake in Architecture, Exadata, Friday Philosophy, Hardware.Tags: Architecture, perception, rant, system development

13 comments

I don’t like the ‘C’ word, it’s offensive to some people and gets used way too much. I mean “cloud” of course. Across all of I.T. it’s the current big trend that every PR department seems to feel the need to trumpet about and it’s what all Marketing people are trying to sell us. I’m not just talking Oracle here either, read any computing, technical or scientific magazine and there are the usual ads by big I.T. companies like IBM and they are all pushing clouds (and the best way to push a cloud is with hot air). And we’ve been here before so many times. It’s not so much the current technical trend that is the problem, it is the obsession with the one architecture as the solution to fit all requirements that is damaging.

When a company tries to insist that X is the answer to all technical and business issues and promotes it almost to the exclusion of anything else, it leads to a lot of customers being disappointed when it turns out that the new golden bullet is no such thing for their needs. Especially when the promotion of the solution translates to a huge push in sales of it, irrespective of fit. Technicians get a load of grief from the angry clients and have to work very hard to make the poor solution actually do what is needed or quietly change the solution for one that is suitable. The sales people are long gone of course, with their bonuses in the bank.

But often the customer confidence in the provider of the solution is also long gone.

Probably all of us technicians have seen it, some of us time after time and a few of us rant about it (occasionally quite a lot). But I must be missing something, as how can an organisation like Oracle or IBM not realise they are damaging their reputation? But they do it in a cyclical pattern every few years, so whatever they gain by mis-selling these solutions is somehow worth the abuse of the customer – as that is what it is. I suppose the answer could be that all large tech companies are so guilty of this that customers end up feeling it’s a choice between a list of equally dodgy second hand car salesmen.

Looking at the Oracle sphere, when Exadata came along it was touted by Oracle Sales and PR as the best solution – for almost everything. Wrongly. Utterly and stupidly wrongly. Those of us who got involved in Exadata with the early versions, especially I think V2 and V3, saw it being implemented for OLTP-type systems where it was a very, very expensive way of buying a small amount of SSD. The great shame was that the technical solution of Exadata was fantastic for a sub-set of technical issues. All the clever stuff in the storage cell software and maximizing hardware usage for a small number of queries (small sometimes being as small as 1) was fantastic for some DW work with huge full-segment-scan queries – and of no use at all for the small, single-account-type queries that OLTP systems run. But Oracle just pushed and pushed and pushed Exadata. Sales staff got huge bonuses for selling them and the marketing teams seemed incapable of referring to the core RDBMS without at least a few mentions of Exadata.

Like many Oracle performance types, I ran into this mess a couple of times. I remember one client in particular who had been told Exadata V2 would fix all their problems. I suspect based solely on the fact it was going to be a multi-TB data store. But they had an OLTP workload on the data set and any volume of work was slaying the hardware. At one point I suggested that moving a portion of the workload onto a dirt cheap server with a lot of spindles (where we could turn off archive redo – it was a somewhat unusual system) would sort them out. But my telling them a hardware solution 1/20th the cost would fix things was politically unacceptable.

Another example of the wonder solution is Agile. Agile is fantastic: rapid, focused development, that gets a solution to a constrained user requirement in timescales that can be months, weeks, even days. It is also one of the most abused terms in I.T. Implementing Agile is hard work, you need to have excellent designers, programmers that can adapt rapidly and a lot, and I mean a LOT, of control of the development and testing flow. It is also a methodology that blows up very quickly when you try to include fix-on-fail or production support workloads. It also goes horribly wrong when you have poor management, which makes the irony that it is often implemented when management is already failing even more tragic. I’ve seen 5 agile disasters for each success, and on every project there are the shiny-eyed Agile zealots who seem to think just implementing the methodology, no matter what the aims of the project or the culture they are in, is guaranteed success. It is not. For many IT departments, Agile is a bad idea. For some it is the best answer.

Coming back to “cloud”, I think I have something of a reputation for not liking it – which is not a true representation of my thoughts on it, but is partly my fault as I quickly tired of the over-sell and hype. I think some aspects of cloud solutions are great. The idea that service providers can use virtualisation and container technology to spin up a virtual server, a database, an application, an application sitting in a database on a server, all in an automated manner in minutes, is great. The fact that the service provider can do this using a restricted number of parts that they have tested to integrate well means they have a way more limited support matrix and thus better reliability. With the Oracle cloud, they are using their engineered systems (which is just a fancy term really for a set of servers, switches, network & storage configured in a specific way with their software configured in a standard manner) so they can test thoroughly and not have the confusion of a type of network switch being used that is unusual or a flavor of Linux that is not very common. I think these two items are what really make cloud systems interesting – fast, automated provisioning and a small support matrix. Being available over the internet is not such a great benefit in my book as that introduces reasons why it is not necessarily a great solution.

But right now Oracle (amongst others) is insisting that cloud is the golden solution to everything. If you want to talk at Oracle Open World 2016 I strongly suspect that not including the magic word in the title will seriously reduce your chances. I’ve got some friends who are now so sick of the term that they will deride cloud, just because it is cloud. I’ve done it myself. It’s a potentially great solution for some systems, ie running a known application that is not performance critical that is accessed in a web-type manner already. It is probably not a good solution for systems that are resource heavy, have regulations on where the data is stored (some clinical and financial data cannot go outside the source country no matter what), alter rapidly or are business critical.

I hope that everyone who uses cloud also insists that the recovery of their system from backups is proven beyond doubt on a regular basis. Your system is running on someone else’s hardware, probably managed by staff you have no say over and quite possibly with no actual visibility of what the DR is. No amount of promises or automated mails saying backups occurred is a guarantee of recovery reliability. I’m willing to bet that within the next 12 months there is going to be some huge fiasco where a cloud services company loses data or system access in a way that seriously compromises a “top 500” company. After all, how often are we told by companies that security is their top priority? About as often as they mess it up and try to embark on a face-saving PR exercise. So that would be a couple a month.

I just wish Tech companies would learn to be a little less single solution focused. In my book, it makes them look like a bunch of excitable children. Give a child a hammer and everything needs a pounding.